▪️ Adding documents to an index

If you are looking for information about adding documents to a plain index, please read the section about adding data from external storages.

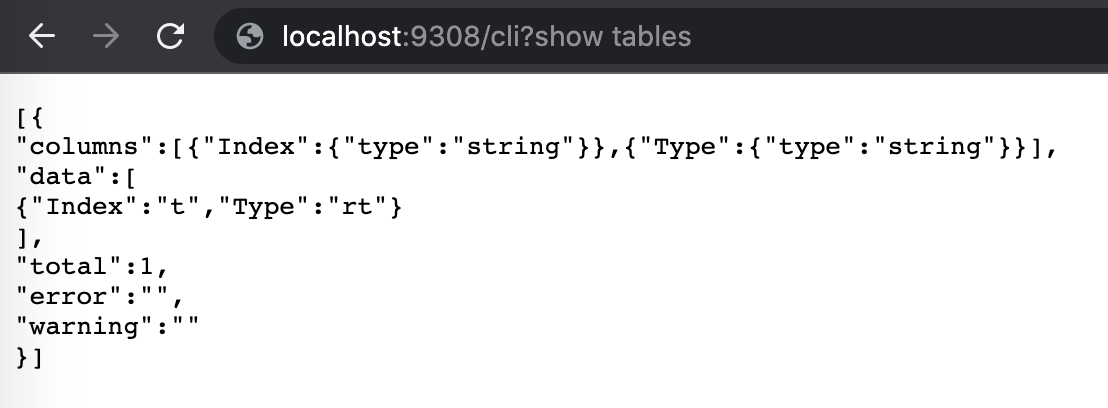

Adding documents in real time is supported only for real-time and percolate indexes. The corresponding SQL command, HTTP endpoint, or client function inserts new rows (documents) with the provided field values into an existing index.

You can insert new documents with values for all fields of the index or for only some of them. In the latter case, the remaining fields are filled with their default values (0 for scalar types, an empty string for text types).

Expressions are not currently supported in INSERT; values must be specified explicitly.

The ID field/value can be omitted because RT indexes support auto ID generation. You can also pass 0 as the ID value to force automatic ID generation. INSERT does not overwrite rows with duplicate IDs; use REPLACE for that.


General syntax:

INSERT INTO <index name> [(column, ...)]

VALUES (value, ...)

[, (...)]

INSERT INTO products(title,price) VALUES ('Crossbody Bag with Tassel', 19.85);

INSERT INTO products(title) VALUES ('Crossbody Bag with Tassel');

INSERT INTO products VALUES (0,'Yellow bag', 4.95);

Query OK, 1 rows affected (0.00 sec)

Query OK, 1 rows affected (0.00 sec)

Query OK, 1 rows affected (0.00 sec)

Documents inserted or replaced into a real-time or percolate index can have their ID column generated automatically. The generator produces a unique document ID under certain guarantees; it should not be treated as an auto-incremented ID.

The generated ID value is guaranteed to be unique under the following conditions:

- the server_id value of the current server is in the range 0 to 127 and is unique among the nodes in the cluster, or the server uses the default value generated from the MAC address as a seed

- the system time of the Manticore node does not change between server restarts

- auto IDs are generated fewer than 16 million times per second between search server restarts

The auto ID generator creates a 64-bit integer for a document ID and uses the following schema:

- bits 0 to 23 are a counter that is incremented on every call to the auto ID generator

- bits 24 to 55 are the Unix timestamp of the server start

- bits 56 to 63 are the server_id

This schema ensures that the generated ID is unique among all nodes in the cluster and that data inserted into different cluster nodes does not create collisions between the nodes.

That is why the first ID produced by the generator is NOT 1 but a larger number. Also, a stream of documents inserted into one index might not get sequential ID values if inserts into other indexes happen between the calls, because the server has a single ID generator shared by all its indexes.
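To make the bit layout described above more concrete, here is a minimal Python sketch that packs and unpacks the three fields. It illustrates only the schema as stated in this section; the helper names (`compose_auto_id`, `decompose_auto_id`) are hypothetical, and the server's actual generator may differ in detail.

```python
from typing import Tuple

# Field widths as described in the schema above.
COUNTER_BITS = 24      # bits 0..23: per-call counter
TIMESTAMP_BITS = 32    # bits 24..55: Unix timestamp of the server start
MAX_SERVER_ID = 127    # server_id range stated in the uniqueness conditions

TIMESTAMP_SHIFT = COUNTER_BITS
SERVER_ID_SHIFT = COUNTER_BITS + TIMESTAMP_BITS  # bits 56..63: server_id


def compose_auto_id(server_id: int, start_timestamp: int, counter: int) -> int:
    """Pack the three fields into a single 64-bit integer (hypothetical helper)."""
    if not 0 <= server_id <= MAX_SERVER_ID:
        raise ValueError("server_id must be in the range 0..127")
    if not 0 <= start_timestamp < (1 << TIMESTAMP_BITS):
        raise ValueError("start_timestamp does not fit into 32 bits")
    if not 0 <= counter < (1 << COUNTER_BITS):
        raise ValueError("counter does not fit into 24 bits")
    return (server_id << SERVER_ID_SHIFT) | (start_timestamp << TIMESTAMP_SHIFT) | counter


def decompose_auto_id(doc_id: int) -> Tuple[int, int, int]:
    """Split a packed ID back into (server_id, start_timestamp, counter)."""
    counter = doc_id & ((1 << COUNTER_BITS) - 1)
    start_timestamp = (doc_id >> TIMESTAMP_SHIFT) & ((1 << TIMESTAMP_BITS) - 1)
    server_id = doc_id >> SERVER_ID_SHIFT
    return server_id, start_timestamp, counter
```

In this sketch the counter occupies the lowest bits, so two consecutive calls on the same server yield IDs that differ by one, while a different server_id or start timestamp changes the high bits. The 24-bit counter holds 16,777,216 values, which is consistent with the "fewer than 16 million" condition listed above.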


INSERT INTO products(title,price) VALUES ('Crossbody Bag with Tassel', 19.85);

INSERT INTO products VALUES (0,'Yellow bag', 4.95);

SELECT * FROM products;

| id | price | title |
|---|---|---|
| 1657860156022587404 | 19.850000 | Crossbody Bag with Tassel |
| 1657860156022587405 | 4.950000 | Yellow bag |

You can insert not just a single document into a real-time index but as many as you want. Inserting in batches of tens of thousands of documents is fine. Keep the following in mind when doing so:

- the larger the batch, the higher the latency of each insert operation

- the larger the batch, the higher the indexing speed you can expect

- each batch insert operation is a single transaction with an atomicity guarantee: either all the new documents appear in the index at once or, in case of a failure, none of them are added

- you might want to increase the max_packet_size value to allow bigger batches


For a bulk insert, provide more documents in parentheses after VALUES. The syntax is:

INSERT INTO <index name>[(column1, column2, ...)] VALUES (value1[, value2, ...])[, (value1[, value2, ...]), ...]

The optional column name list lets you explicitly specify values for only some of the columns present in the index. All the other columns are filled with their default values (0 for scalar types, an empty string for string types).

For example:

INSERT INTO products(title,price) VALUES ('Crossbody Bag with Tassel', 19.85), ('microfiber sheet set', 19.99), ('Pet Hair Remover Glove', 7.99);

Query OK, 3 rows affected (0.01 sec)

Expressions are not currently supported in INSERT and values should be explicitly specified.
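A client that prepares batches itself has to render every value as an explicit literal. The following Python sketch shows one way to split rows into batches and assemble a multi-row INSERT statement. The helper names (`sql_literal`, `build_bulk_insert`, `chunked`) are hypothetical, and the escaping rule (a backslash before single quotes and backslashes) is an assumption that should be checked against the escaping rules of your server version. Index and column names are inserted as-is, so they must come from trusted code.

```python
from typing import Iterator, List, Sequence, Union

Value = Union[int, float, str]


def sql_literal(value: Value) -> str:
    """Render a Python value as an explicit SQL literal (hypothetical helper)."""
    if isinstance(value, bool):
        raise TypeError("booleans are not handled in this sketch")
    if isinstance(value, (int, float)):
        return repr(value)
    if isinstance(value, str):
        # Assumption: backslash escaping for backslashes and single quotes.
        escaped = value.replace("\\", "\\\\").replace("'", "\\'")
        return "'" + escaped + "'"
    raise TypeError("unsupported value type: " + type(value).__name__)


def build_bulk_insert(index: str, columns: Sequence[str],
                      rows: Sequence[Sequence[Value]]) -> str:
    """Build one INSERT statement that carries all rows of a batch."""
    if not rows:
        raise ValueError("a batch must contain at least one row")
    for row in rows:
        if len(row) != len(columns):
            raise ValueError("row length does not match the column list")
    column_list = ", ".join(columns)
    tuples = ", ".join(
        "(" + ", ".join(sql_literal(v) for v in row) + ")" for row in rows
    )
    return "INSERT INTO {}({}) VALUES {};".format(index, column_list, tuples)


def chunked(rows: Sequence, batch_size: int) -> Iterator[List]:
    """Yield consecutive slices of at most batch_size rows."""
    for start in range(0, len(rows), batch_size):
        yield list(rows[start:start + batch_size])


rows = [
    ("Crossbody Bag with Tassel", 19.85),
    ("microfiber sheet set", 19.99),
    ("Pet Hair Remover Glove", 7.99),
]
# One statement per batch; each statement is applied as a single transaction.
statements = [build_bulk_insert("products", ["title", "price"], batch)
              for batch in chunked(rows, 2)]
```

With a batch size of 2, the three rows produce two statements. Choosing the batch size is the trade-off described above: larger batches raise the latency of each statement but improve overall indexing speed.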


A multi-value attribute (MVA) is inserted as a list of numbers in parentheses:

INSERT INTO products(title, sizes) VALUES('shoes', (40,41,42,43));

A JSON value can be inserted as an escaped string (via SQL, HTTP, PHP) or as a JSON object (via HTTP).


INSERT INTO products VALUES (1, 'shoes', '{"size": 41, "color": "red"}');
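When the statement is built in client code, the JSON value first has to be serialized and then quoted as a string literal. Below is a minimal Python sketch of that step; `json_sql_literal` is a hypothetical helper, and the backslash escaping of single quotes and backslashes is an assumption to verify against your server's escaping rules.

```python
import json


def json_sql_literal(obj) -> str:
    """Serialize obj to JSON and wrap it as a single-quoted SQL string."""
    text = json.dumps(obj)
    # Assumption: backslashes and single quotes are escaped with a backslash.
    escaped = text.replace("\\", "\\\\").replace("'", "\\'")
    return "'" + escaped + "'"


attributes = {"size": 41, "color": "red"}
statement = ("INSERT INTO products VALUES (1, 'shoes', "
             + json_sql_literal(attributes) + ");")
```

For the dictionary above, `statement` matches the SQL example shown earlier in this section. Double quotes inside the JSON text need no extra escaping here because the SQL literal is delimited by single quotes.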